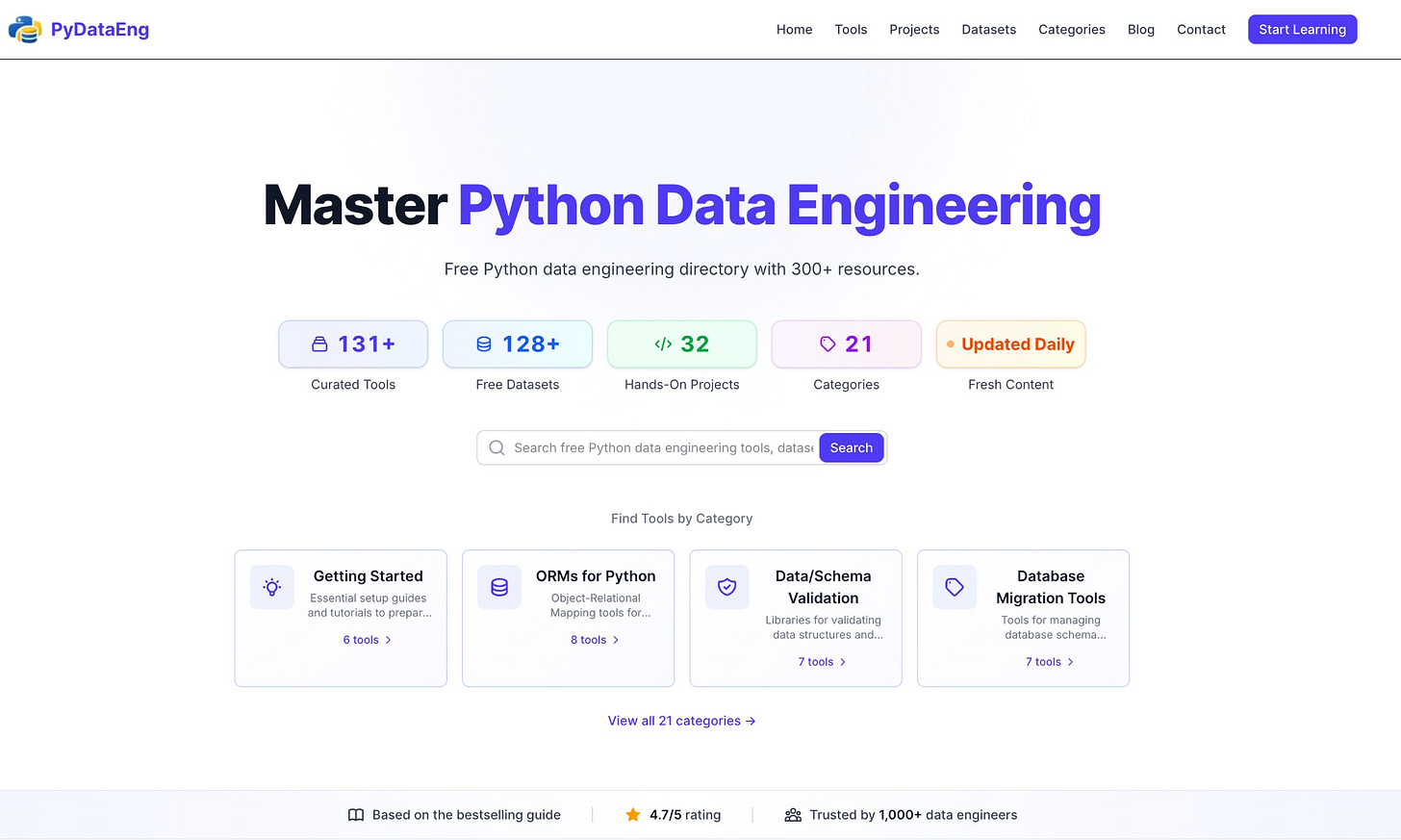

Python Data Engineering Resources - Free Tools, Projects, and Datasets

Everything you need to learn and practice Python data engineering, in one place

If you’re learning data engineering with Python, you probably know the feeling.

One tab with a tutorial.

Another with a GitHub repo.

A Stack Overflow answer from 2017.

A blog post that is already outdated.

You spend more time searching than learning.

I’ve been there. And that’s exactly why I built the Python Data Engineering Directory.

Why I built this

The Python data engineering ecosystem is huge.

Too huge, especially if you’re learning.

There are hundreds of tools, frameworks, and libraries.

Some are popular. Some are niche. Some are just noise.

The hard part is not learning Python.

The hard part is knowing what actually matters and what people really use in practice.

So I decided to solve that problem as best I could.

What the directory is

It’s a free, curated directory of Python data engineering resources.

No paywalls. No premium tier. No upsells.

Right now it includes:

130+ production-ready tools: Pandas, Apache Airflow, dbt, PySpark, SQLAlchemy, and many more.

120+ free datasets and APIs: Weather data, NASA data, GitHub, Reddit, Wikipedia, public APIs.

30+ hands-on projects: Real pipelines with real code. Not toy examples.

20+ clear categories: From basics to orchestration, big data, validation, and deployment.

Every entry is hand-picked.

No random links. No scraping GitHub stars.

I’ve built and maintained data pipelines for many years, and I focused on tools that show up in real production systems.

What problem it solves

When you learn data engineering, you constantly hit questions like:

Should I use Airflow, Prefect, or dbt?

Is PySpark worth learning for my use case?

Which data validation tool should I start with?

What kind of projects actually make sense in a portfolio?

The directory helps by showing:

Which tools fit which job - Batch vs streaming. Lightweight vs heavy. Simple vs scalable.

Projects that feel real - A Pokémon ETL pipeline with Prefect, a weather data pipeline with dlt, or sales analysis with Pandas.

Up-to-date practices - No abandoned libraries. No outdated advice.

Everything is organized so you can see the landscape instead of guessing your way through it.
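
To make "real pipelines" a bit more concrete, here is a minimal sketch of the extract, transform, load shape that most of these projects share. It is not taken from the directory. It uses only the Python standard library, and the sample data, table name, and function names are made up for illustration.

```python
import csv
import io
import sqlite3

# Hypothetical sample data standing in for a real sales export.
RAW_CSV = """order_id,product,quantity,unit_price
1,widget,2,9.50
2,gadget,1,20.00
3,widget,,9.50
4,gadget,3,20.00
"""


def extract(raw: str) -> list[dict]:
    """Read CSV text into a list of row dictionaries."""
    return list(csv.DictReader(io.StringIO(raw)))


def transform(rows: list[dict]) -> list[tuple]:
    """Drop rows with a missing quantity and compute revenue per order."""
    cleaned = []
    for row in rows:
        if not row["quantity"]:
            continue  # skip incomplete records
        quantity = int(row["quantity"])
        unit_price = float(row["unit_price"])
        cleaned.append((int(row["order_id"]), row["product"], quantity * unit_price))
    return cleaned


def load(records: list[tuple], conn: sqlite3.Connection) -> None:
    """Write the cleaned records into a SQLite table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS sales "
        "(order_id INTEGER PRIMARY KEY, product TEXT, revenue REAL)"
    )
    conn.executemany("INSERT INTO sales VALUES (?, ?, ?)", records)
    conn.commit()


def run_pipeline(raw: str = RAW_CSV) -> dict:
    """Run extract, transform, and load, then return revenue per product."""
    conn = sqlite3.connect(":memory:")  # in-memory database, nothing touches disk
    load(transform(extract(raw)), conn)
    totals = dict(
        conn.execute("SELECT product, SUM(revenue) FROM sales GROUP BY product")
    )
    conn.close()
    return totals


if __name__ == "__main__":
    print(run_pipeline())
```

In a real project, the CSV string would typically be replaced by an API or file source, and the in-memory database by a proper warehouse. Tools like Prefect, dlt, or Pandas take over individual steps, but the three-stage structure stays much the same.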

Who this is for

This is useful if you are a beginner moving into data engineering, a career switcher who wants structure, an intermediate engineer filling gaps, or a hiring manager trying to understand the ecosystem.

You don’t need to know everything.

You just need a clear map.

How to use it

The directory is easy to explore:

Start with Getting Started if you’re new

Go to ETL Frameworks if you want to build pipelines

Check Big Data Processing when you’re ready for Spark

Use Hands-On Projects to learn by building

You can browse, search, or filter by category.

The bottom line

Learning Python data engineering shouldn’t feel chaotic.

You shouldn’t have to guess which tools matter.

You shouldn’t waste hours digging through outdated content.

This directory exists to save you time and give you clarity.

It’s free, practical, and built with real-world use in mind.

If you’re learning or working with Python data pipelines, it might be worth bookmarking. I hope it will serve you well.